Unlocking the Power of Discord: A DevRel Guide to Boosting Community Engagement

Engaging community members on Discord can sometimes feel like solving a complex puzzle.

You may have noticed a trend: new members burst onto the scene with enthusiasm, and there’s a surge of activity when a specific technical question pops up. But in between these moments, regular members often seem to hang back.

Acknowledging and understanding this pattern of engagement is a fundamental step towards morphing your Discord server from a simple question-and-answer hub to a lively, continuously learning, and interactive space.

Yet, for a DevRel community to genuinely flourish on Discord, we need to evolve this platform from being merely functional into an ecosystem that fosters growth and shared learning, and that encourages vulnerability.

Identifying Your Audience and Delivering Quick Wins

A key component of transforming your Discord community involves knowing your audience inside out.

Understand their needs, wants, and the obstacles they face. Once you’ve done that, the next step is to provide quick wins that address these issues.

Quick wins can be anything that provides immediate value to your community members, solving a problem they have, or giving them new insights or tools.

Consider this: in a DevRel community focused on cloud development, if members often find it challenging to choose a suitable tech stack, a quick win could be a curated list of popular tech stacks within the community. Couple this with reviews and user experiences, and you’ve got a helpful resource that not only solves a problem but also stimulates conversation.

The key to making quick wins work for your community is consistency.

By regularly providing quick wins, you subtly shift members’ perception of your community from being just another Q&A forum to a learning hub where they can engage in exciting discussions and acquire new knowledge.

Engaging with the Community

The next piece of the puzzle is building awesome community engagement.

To engage with your community effectively, start by understanding your audience through data. Identify the conversations that generate the most replies and likes and try to pinpoint why these topics resonate with your members.
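As a rough illustration of that kind of analysis, here is a minimal sketch that ranks threads by replies and reactions. The `Thread` fields, the sample data, and the idea of weighting a reply twice as heavily as a reaction are all assumptions made for this example; in practice you would feed in whatever your bot or analytics export gives you.

```python
from dataclasses import dataclass


@dataclass
class Thread:
    # Hypothetical shape for an exported conversation; adapt to your own data.
    title: str
    replies: int
    reactions: int


def top_threads(threads, limit=3, reply_weight=2.0):
    """Rank threads by a weighted score; replies count more than reactions (an assumption)."""
    def score(thread):
        return reply_weight * thread.replies + thread.reactions

    return sorted(threads, key=score, reverse=True)[:limit]


# Made-up sample data for illustration only.
threads = [
    Thread("Which tech stack for serverless?", replies=14, reactions=9),
    Thread("Weekly release notes", replies=1, reactions=3),
    Thread("Show us your side project", replies=8, reactions=22),
]

for thread in top_threads(threads, limit=2):
    print(thread.title)
```

Once you have the top few threads, the useful work is still manual: read them and ask what they have in common.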

With this understanding, focus on initiating conversations and producing content that strikes a chord with your community.

An excellent strategy is to create one piece of content per week and post at least two conversation starters. This might seem simple, but it is incredibly effective. (Curious what devs are interested in? See the data.)

When members visit your community, they should encounter engaging discussions, relevant content, and intriguing finds, not just a series of question-and-answer exchanges.

If you keep up a steady stream of content and conversation starters, you provide more reasons for members to engage and come back regularly.

External Pulls: Drawing People Back In

Another important factor to consider is the role of external pulls – the things you do outside of Discord to draw people back in.

For instance, you might send an email update about a new piece of content, or post on social media about a conversation that’s gaining momentum in your Discord community.

If members have been dormant in your Discord channel, these external pulls can serve as reminders, nudging them back towards engagement.
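To decide who should receive one of these nudges, you first need a list of dormant members. The sketch below assumes you can export a mapping of member name to last-active date; the 30-day threshold, the names, and the dates are illustrative choices, not recommendations.

```python
from datetime import date, timedelta


def dormant_members(last_active, today, threshold_days=30):
    """Return members whose last activity is older than the threshold, longest-dormant first."""
    cutoff = today - timedelta(days=threshold_days)
    dormant = [(name, seen) for name, seen in last_active.items() if seen < cutoff]
    return [name for name, _ in sorted(dormant, key=lambda pair: pair[1])]


# Hypothetical export of member -> last activity date.
last_active = {
    "ada": date(2024, 5, 28),
    "grace": date(2024, 3, 2),
    "linus": date(2024, 4, 15),
}

print(dormant_members(last_active, today=date(2024, 6, 1)))
```

The resulting list can then feed whichever external channel you use, such as an email update or a social post.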

The Journey Towards a Thriving Discord Community

Remember, progress trumps perfection.

Start with small steps like initiating a couple of conversations each week, and observe what resonates with your community. As you learn more about your audience and refine your strategies, you’ll find the puzzle of engagement becoming less complex.

With time, you’ll spot trends in the content and discussions that often stir up interaction. This will help you create more engaging DevRel content and spark lively discussions on Discord.

Creating a thriving community on Discord doesn’t happen overnight. It requires consistent effort, creativity, and a willingness to listen and adapt to your audience’s needs. But the reward—a vibrant, engaged community—is well worth the journey.

As always, I’m here to help. Reach out if you have any questions or need guidance on boosting your Discord community’s engagement.

Together, we can unlock the true potential of your DevRel efforts on Discord.

Curious to get an awesome job in DevRel? Read my guide.

Decoding DevRel: Exploring Job Roles in Developer Relations

DevRel, short for Developer Relations, is a critical part of many tech companies. It refers to the strategic efforts aimed at engaging and nurturing relationships with the developer community.

This involves creating a conducive environment for developers, facilitating productive communication, and promoting the company’s products or services among the community.

But what roles form the crux of DevRel? Let’s dig in.

Key DevRel Jobs

DevRel usually consists of three primary roles: Director of Developer Relations, Community Manager, and Evangelist.

Each plays a unique part in establishing and cultivating relationships between the company and its developer community.

1. Director of Developer Relations

The Director of Developer Relations is often a senior role within companies that are further along in their growth.

This individual is responsible for overseeing the DevRel strategy, ensuring that cross-team collaboration occurs effectively.

They usually interact with different departments like marketing, sales, product, and engineering, making sure these teams align with stakeholder requirements.

The Director of Developer Relations role isn’t typically the first hire in a nascent company. Rather, this role becomes crucial as the company matures and requires a leader to synchronize the DevRel activities with the broader company strategy.

2. Community Manager

The Community Manager is akin to the backbone of the developer community.

Their role involves building, nurturing, and growing the community by creating engaging content, managing community channels, and organizing events.

They are also responsible for improving the onboarding process for new members and creating engaging experiences for existing ones.

The Community Manager’s role becomes significant in the early stages of community-building, with the primary goal being attracting and retaining members.

This role is often the starting point in setting up a solid developer community structure.

3. Evangelist

The Evangelist, the voice of the company, is primarily tasked with creating awareness and excitement around the company’s products or services.

Their responsibilities include delivering tech demos, advocating for the company, performing outreach, and creating compelling content.

They are often the face of the company at conferences, webinars, and other public forums. Once a robust community structure is in place, the Evangelist plays a crucial role in driving traffic and attention to it.

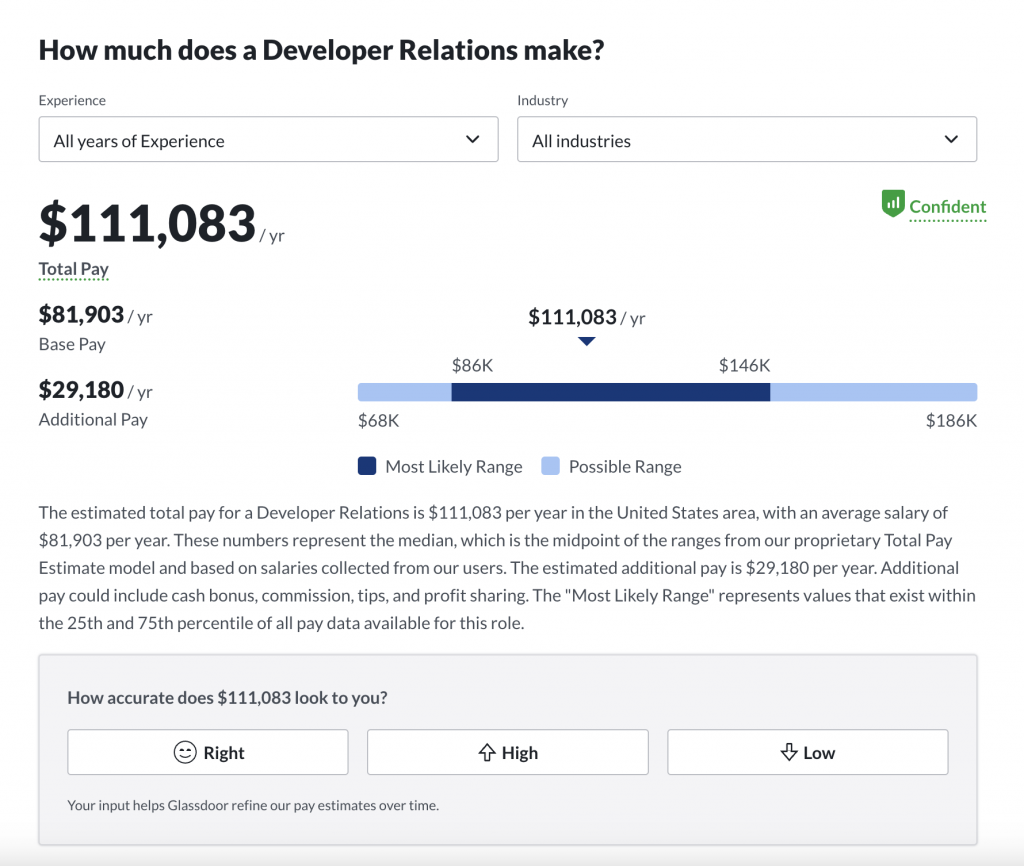

DevRel Salaries

DevRel salaries vary widely depending on the specific role, company size, and geographical location.

Be sure to check out this link from Glassdoor which provides some fairly current salary information. This is the salary for DevRel at the time of writing:

Who do you hire first?

For a successful DevRel strategy, I usually recommend companies start out with a community management role. This role establishes the necessary foundations for the community, ensuring that there’s a structured delivery system in place.

Following this, an evangelist should be brought in to generate excitement and awareness around the community. Ideally, when the community structure is stable and operational, the evangelist drives traffic towards it.

Lastly, as the company grows and matures, a Director of Developer Relations can be introduced. This person coordinates the activities of the community manager and evangelist, aligning these with the company’s overarching strategy.

It’s crucial to remember that while these roles have distinct responsibilities, there is often overlap, particularly in smaller teams or startup environments. Flexibility is a must, with the success of DevRel lying in the balance and synchronization of all these roles.

What about a DevRel Engineer?

A noteworthy role in the realm of DevRel is the DevRel Engineer. This role, while not as prominent as the aforementioned ones, is increasingly becoming essential. But what is a DevRel Engineer?

A DevRel Engineer is a hybrid role, combining aspects of software engineering and DevRel.

These folks are technically skilled, often with a strong background in software development, and carry the responsibility of representing the developers within the company.

Their role includes writing code, building demos, providing developer feedback to the product team, and communicating technical aspects of the product to the developer community.

Their position serves as a bridge between the company’s technical team and the developer community, which allows them to understand both parties’ needs and communicate effectively.

Stay focused on value

However you hire, ensure you focus on these key elements as you start to build out your developer community:

- Community First: Always prioritize the community. The developer community is not just a customer base; it’s a group of individuals who can help improve and promote your product. Listen to their needs, consider their feedback, and communicate openly with them.

- Authenticity: Authenticity is key in DevRel. The community values genuine interactions and honest feedback. Therefore, maintaining authenticity in your communication helps build trust and fosters a strong relationship.

- Value Delivery: Ensure that you’re not just promoting your product but providing value to the community. This could be through educational content, insightful discussions, or useful tools. Delivering value helps maintain engagement and loyalty within the community.

- Collaboration: Collaboration is a vital aspect of DevRel. Whether it’s collaborating with the community, with other teams in your company, or even with other companies, successful DevRel involves working together to achieve shared goals.

DevRel is a dynamic, multi-faceted field, providing a variety of job roles, each playing a unique part in nurturing relationships with the developer community.

From community managers and evangelists to the director of developer relations and DevRel engineers, these roles each contribute significantly to a successful DevRel strategy.

Keep in mind the importance of authentic communication, delivering value, and fostering a collaborative environment for a thriving developer relations program.

What is Developer Relations (DevRel)? A Complete Guide.

Developer Relations, commonly known as DevRel, is a rapidly growing field within the tech industry that focuses on fostering relationships between companies and their developer communities.

DevRel professionals bridge the gap between companies and developers by providing technical guidance, support, and resources. This allows companies to better understand the needs and perspectives of their developer communities, which ultimately drives the growth and adoption of their products and services.

Here is a video guide too that might help:

What are the roles and responsibilities in DevRel?

DevRel professionals come from various backgrounds, often with experience in software development, technical writing, community management, and marketing.

The primary roles within DevRel include Developer Advocates, Developer Evangelists, and Community Managers.

Their responsibilities encompass a wide range of activities such as:

Technical content creation

DevRel professionals create technical resources such as tutorials, blog posts, and documentation to help developers understand, use, and integrate a company’s products and services effectively.

Public speaking and workshops

Developer Advocates and Evangelists often attend and speak at conferences, meetups, and webinars to share knowledge, engage with the community, and showcase the company’s technology.

Community engagement

DevRel professionals facilitate communication between developers and the company by engaging with the community through forums, social media, and other channels. They gather feedback, address concerns, and provide support to enhance the user experience.

Internal advocacy

A key function of DevRel is to represent the developer’s voice within the company. DevRel professionals collaborate with product, engineering, and marketing teams to ensure that the company’s products and services align with the needs and expectations of the developer community.

Developer programs and initiatives

DevRel teams often create and manage developer programs, hackathons, and other initiatives to incentivize and recognize community contributions, drive adoption, and strengthen the developer ecosystem.

Why is DevRel so important?

Developer Relations offers numerous benefits for companies.

By fostering relationships between the company and its developer community, DevRel can significantly impact the growth, adoption, and success of the company’s products and services.

Here are some key benefits of DevRel for a company:

Improved product adoption

DevRel professionals create resources, provide support, and share knowledge that enables developers to understand and use a company’s products and services more effectively. This leads to increased product adoption, usage, and market share.

Enhanced user experience

DevRel teams gather feedback and insights from the developer community, which helps the company iterate on its products and services to better meet the needs and expectations of developers. This leads to an enhanced user experience and higher customer satisfaction.

Stronger brand loyalty

By fostering meaningful relationships with developers and providing valuable resources, DevRel teams can create a sense of trust and loyalty towards the company’s brand. This can lead to long-term commitment from the developer community and promote advocacy for the company’s products and services.

Faster innovation

An engaged developer community can contribute valuable ideas, features, and improvements to a company’s products. By encouraging collaboration and innovation, DevRel can help the company stay competitive and agile in the fast-paced tech industry.

Expanded developer ecosystem

DevRel initiatives like hackathons, developer programs, and open-source contributions can attract more developers to the company’s ecosystem. This can lead to the development of third-party tools, integrations, and add-ons that enhance the functionality and reach of the company’s products.

Valuable feedback

DevRel professionals serve as a bridge between the company and the developer community, ensuring that feedback from developers is taken into account when making product decisions. This can help the company prioritize features and improvements that truly matter to the users.

Increased visibility

DevRel professionals often attend and speak at conferences, meetups, and other industry events, showcasing the company’s technology and sharing expertise. This increases brand awareness and visibility within the developer community and the broader tech industry.

Cost-effective marketing

DevRel initiatives can provide a cost-effective means of marketing and promoting the company’s products and services. By focusing on creating valuable content and resources, DevRel teams can organically attract and engage with the developer community, reducing the need for traditional advertising and marketing campaigns.

Internal advocacy

DevRel professionals act as the voice of the developer within the company, ensuring that product, engineering, and marketing teams understand and address the needs and concerns of the developer community. This leads to better-aligned products and services that cater to the actual needs of the users.

What is a typical DevRel salary?

The typical salary of a DevRel engineer can vary widely depending on factors such as experience, location, company size, and industry. In general, DevRel professionals can expect to earn a competitive salary compared to other software engineering roles.

In my 22+ years helping hundreds of companies build technical communities, I have seen the average base salary for a DevRel engineer in the United States sit at around $90,000 to $130,000 per year.

However, more experienced DevRel engineers, particularly those working for large tech companies, could earn significantly more, with salaries exceeding $150,000 or rising above $400,000 in some cases. Some of the larger companies even pay $1m+ for notable DevRel leaders.

It is essential to note that salaries can also vary significantly by country or region.

In addition to the base salary, DevRel engineers may also be eligible for bonuses, stock options, or other benefits, which can further increase their total compensation.

To get a more accurate and up-to-date picture of DevRel engineer salaries, you can consult various resources such as Glassdoor, Payscale, or other job and salary websites. You can also network with DevRel professionals in your area to gain more insight into the earning potential within this field.

How to measure success in developer relations?

Measuring success in Developer Relations can be challenging due to the diverse range of activities and goals associated with the field.

However, there are several key performance indicators (KPIs) and metrics that can help evaluate the effectiveness of DevRel efforts. Here are some common ways to measure success in Developer Relations:

- Community growth: Track the number of developers joining your community, participating in forums, or subscribing to newsletters. A growing community is a strong indication that your DevRel efforts are resonating with developers and attracting new members.

- Engagement: Measure the level of interaction within the developer community, including forum posts, social media mentions, or event attendance. High engagement levels show that developers find value in your content and resources, fostering a more active and vibrant community.

- Technical content consumption: Monitor the usage of your technical resources, such as blog views, tutorial completions, and documentation page visits. This can help you understand which topics are most valuable to your audience and guide your content strategy.

- Developer satisfaction: Conduct surveys or collect feedback from developers to gauge their satisfaction with your products, resources, and support. High satisfaction levels indicate that you’re meeting the needs and expectations of your developer community.

- Product adoption: Track the number of new users, active users, or API calls made by developers. An increase in these metrics demonstrates that your DevRel efforts are contributing to greater product adoption and usage.

- Developer contributions: Measure the number of contributions made by developers, such as bug reports, feature requests, or pull requests. This can help you assess the impact of your DevRel initiatives on fostering collaboration and innovation.

- Internal advocacy: Evaluate how effectively your DevRel team is representing the developer’s voice within the company. This can include the number of product improvements or feature requests driven by developer feedback or the level of collaboration between your DevRel team and other departments.

- Event success: Assess the impact of your events, such as webinars, workshops, or conferences, by tracking attendance, feedback, or leads generated. Successful events will increase brand awareness, community engagement, and product adoption.

Remember that not all KPIs and metrics will be equally important for every organization or DevRel team…

…so, identify the key goals of your Developer Relations strategy and select the most relevant metrics to measure success. Regularly review and adjust your strategy based on your findings to ensure continuous improvement and alignment with your organization’s objectives.
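Whichever metrics you pick, it helps to review them as changes over time rather than raw totals. Here is a minimal sketch of a month-over-month comparison; the metric names and the numbers are made up for illustration, and your own sources (community platform, analytics, API logs) will differ.

```python
def percent_change(previous, current):
    """Month-over-month change as a percentage; None when there is no baseline."""
    if previous == 0:
        return None
    return round((current - previous) / previous * 100, 1)


# Hypothetical monthly snapshots of a few KPIs chosen for this example.
last_month = {"members": 1200, "forum_posts": 340, "api_calls": 50000}
this_month = {"members": 1320, "forum_posts": 306, "api_calls": 65000}

report = {
    metric: percent_change(last_month[metric], this_month[metric])
    for metric in last_month
}

for metric, change in report.items():
    print(f"{metric}: {change}%")
```

A report like this makes it easier to spot a metric moving against the others, for example membership growing while forum posts decline.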

How to find a developer relations job?

Here is a video I put together that will help:

Finding a Developer Relations job involves a combination of research, networking, and showcasing your skills and experience. Here are some steps to help you find a DevRel job:

Start by researching the industry. Familiarize yourself with the DevRel landscape, including the various roles, responsibilities, and skills required. Understand the differences between Developer Advocates, Developer Evangelists, and Community Managers, and determine which role best aligns with your interests and strengths.

Next, update your resume and online profiles. Be sure to highlight your relevant experience, technical skills, and accomplishments related to DevRel, such as public speaking, content creation, community management, or event organization. Ensure your LinkedIn profile, personal website, or portfolio showcases your expertise and passion for DevRel.

If you haven’t already, create a presence in the developer community. Actively participate in developer communities, forums, or social media platforms related to your area of interest. Share your knowledge, answer questions, and engage with other members to build your reputation and visibility.

It might sound a little cheesy, but network with DevRel professionals. Connect with DevRel professionals through social media, conferences, meetups, or online communities. Networking can help you learn about job opportunities, get advice, and gain insights into the DevRel job market.

As part of this, be sure to attend industry events. Attend conferences, meetups, workshops, hackathons, or webinars related to DevRel or your specific technology interests. These events can help you learn about the latest trends, network with professionals, and discover job opportunities.

Be sure to develop a content portfolio. Create and share technical content, such as blog posts, tutorials, or videos, to demonstrate your ability to communicate complex topics effectively. This will serve as proof of your expertise and communication skills, which are highly valued in DevRel roles.

Always start by leveraging your existing network if you have connections. Reach out to your professional network, including colleagues, friends, or alumni, to inquire about DevRel job opportunities within their organizations.

If you don’t have connections, use job search platforms. Use job search platforms like LinkedIn, Glassdoor, Indeed, or specialized tech job boards to find DevRel job listings. Set up job alerts to receive notifications when new positions become available.

A great way to get started is to apply for internships or entry-level positions. If you’re new to DevRel, consider applying for internships or entry-level positions to gain experience and build your portfolio. This can increase your chances of landing a more specialized DevRel role later on.

Importantly though, tailor your application. When applying for DevRel jobs, customize your resume and cover letter to match the specific requirements and responsibilities of each role. Highlight your relevant experience, skills, and accomplishments to show your fit for the position.

How to prepare for a Developer Relations interview?

Preparing for a DevRel interview involves understanding the role, showcasing your technical knowledge, communication skills, and passion for engaging with developer communities.

Here are 10 tips to help you prepare for a DevRel interview:

- Research the company and its products: Learn about the company’s products, services, and technology stack. Understand the target developer audience and familiarize yourself with the company’s existing DevRel efforts, such as documentation, blog posts, and community forums.

- Review the job description: Carefully study the job description and note the specific skills, responsibilities, and requirements. Be prepared to discuss your experience and accomplishments related to these aspects.

- Prepare your portfolio: Compile a portfolio of your technical content, such as blog posts, tutorials, videos, or code samples. Be ready to discuss your thought process, challenges faced, and the impact of your work. If you have experience speaking at events or hosting workshops, mention those as well.

- Be ready to showcase your technical knowledge: DevRel roles often require deep understanding of the technology you will be advocating. Be prepared to answer technical questions related to the company’s products or your area of expertise.

- Practice storytelling: Develop clear and concise stories about your experiences engaging with developer communities, solving technical problems, or creating content. Use the STAR (Situation, Task, Action, Result) method to structure your answers and demonstrate your problem-solving, communication, and collaboration skills.

- Prepare for behavioral questions: Be ready to answer questions about your teamwork, conflict resolution, time management, and adaptability. DevRel roles require strong interpersonal skills, so interviewers will be looking for candidates who can work well with others and handle challenging situations.

- Demonstrate your passion for developer communities: Share your experiences participating in or leading developer communities, forums, or open-source projects. Highlight your enthusiasm for helping others, learning from peers, and staying up-to-date with industry trends.

- Prepare questions for the interviewer: Show your interest in the role and company by preparing thoughtful questions about the DevRel team, company culture, goals, and expectations. This will also help you determine if the position is a good fit for you.

- Practice your communication skills: DevRel roles require excellent communication skills, so practice speaking clearly and confidently. Consider rehearsing your answers to potential interview questions with a friend or recording yourself to identify areas for improvement.

- Follow up after the interview: Send a thank-you email to the interviewer, expressing your gratitude for the opportunity and reiterating your interest in the position. This can leave a positive impression and demonstrate your enthusiasm for the role.

All in all…a fun and rewarding job

So there we have it, a comprehensive overview of Developer Relations. If you want to up your game in DevRel, be sure to join the Bacon Bulletin, where I regularly send out valuable tips, tricks, and guidance for building incredible communities. It is totally FREE.

Building Community Engagement: Practical Activities, Metrics, and Tips for Success

Community engagement is the cornerstone of any successful online community, whether it be a community forum, Slack channel, or Discord channel.

Achieving meaningful engagements with your community members can be challenging, but it is essential for the growth and sustainability of your community.

This blog post will explore practical activities, metrics, and best practices for community engagement, focusing on various aspects of community engagement work, such as community engagement organizations, community engagement theory, and community engagement framework.

We will also touch upon community engagement opportunities and principles, as well as intentional communities looking for members.

Understanding Community Engagement

Defining community engagement can be complex, as it encompasses various elements such as community service, interaction, and contributions.

A general definition of community engagement is the process of working collaboratively with community members to address issues that affect the community, fostering partnerships and shared decision-making.

Community engagement examples can include volunteer opportunities, community events, and online discussions. The goals of community engagement are to build trust, increase participation, and create lasting, positive change.

Want a video overview? Check this one out:

Types of Community Engagement

There are several types of community engagement, ranging from passive involvement to active collaboration. These types include:

- Inform: Sharing information with community members to keep them informed about issues, plans, and opportunities.

- Consult: Seeking community members’ opinions and feedback on specific topics or proposals.

- Involve: Actively engaging community members in the decision-making process, usually through workshops, focus groups, or surveys.

- Collaborate: Partnering with community members to develop and implement projects, programs, or initiatives.

- Empower: Supporting community members in leading their initiatives, making decisions, and implementing solutions to issues they identify.

OK then, so how do you build engagement and drive these different types of participation? Let’s explore…

Practical Activities for Community Engagement

Creating opportunities for community members to engage with your community is crucial. Some practical activities for community engagement include:

- Hosting virtual events, such as webinars, workshops, or panel discussions.

- Creating a community newsletter or blog to keep members informed about community news and events.

- Encouraging member-generated content, such as articles, videos, or artwork.

- Organizing volunteer opportunities or community service initiatives to support local causes.

- Developing mentorship programs to connect experienced members with newer members.

- Creating subgroups, channels, or threads for specific topics or interests, fostering smaller, focused conversations.

The key here is experimentation…try lots of different ideas to explore different ways in which you can add value.

You should also check out this video, which has some more practical tips:

Community Engagement Metrics

I remember once being at an airport bar and I got chatting to a guy next to me. He said, “If you ain’t measuring it, it doesn’t exist.”

He wasn’t wrong.

Measuring the success of your community engagement initiatives is essential for improvement and growth. Some key metrics to track include:

- Active users: The number of members actively participating in your community (posting, commenting, reacting, etc.).

- Retention rate: The percentage of members who remain active in your community over time.

- Content contribution: The number of posts, comments, or reactions generated by community members.

- Event attendance: The number of community members attending your events or participating in your programs.

- Member satisfaction: The overall satisfaction of community members, usually gathered through surveys or feedback forms.

Depending on your community platform, be sure to measure all of these metrics to constantly evaluate how your community is growing.
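If your platform lets you export activity, several of these metrics can be computed from a simple event log. The sketch below assumes each event is a `(user, month, kind)` tuple and defines retention as the share of last month's active users who were active again this month; both the data shape and that definition are assumptions for the example.

```python
from collections import Counter


def active_users(events, period):
    """Set of users with at least one event in the given period."""
    return {user for user, month, _ in events if month == period}


def retention_rate(events, previous, current):
    """Share of the previous period's active users who are active again in the current one."""
    before = active_users(events, previous)
    if not before:
        return 0.0
    return len(before & active_users(events, current)) / len(before)


def contributions(events, period):
    """Count of events in the period, grouped by kind (post, comment, reaction)."""
    return Counter(kind for _, month, kind in events if month == period)


# Hypothetical activity export: (user, month, kind of contribution).
events = [
    ("ada", "2024-05", "post"),
    ("ada", "2024-06", "comment"),
    ("grace", "2024-05", "reaction"),
    ("linus", "2024-05", "post"),
    ("linus", "2024-06", "post"),
    ("margaret", "2024-06", "reaction"),
]

print(len(active_users(events, "2024-06")))
print(round(retention_rate(events, "2024-05", "2024-06"), 2))
print(dict(contributions(events, "2024-06")))
```

Event attendance and member satisfaction usually live in other tools (event software, survey forms), so they are left out of this sketch.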

Not just the how, the why: some best practices

Great engagement isn’t just about adding value; it is also about how you participate. Make sure you focus on the following:

- Be inclusive: Ensure that all community members, regardless of their background, feel welcomed and valued. Actively seek out and address barriers to participation.

- Prioritize clear communication: Keep communication channels open, transparent, and accessible to all members. Provide information in multiple formats and languages, if necessary.

- Build trust: Develop genuine relationships with community members by being transparent, honest, and open to feedback. Show that you genuinely care about their opinions and needs.

- Foster collaboration: Encourage community members to collaborate with each other and with your organization. Promote a sense of shared ownership and responsibility for the community’s success.

- Continuously evaluate and improve: Regularly assess the effectiveness of your community engagement efforts using the metrics discussed earlier. Make adjustments as needed to better serve your community’s needs.

There are many ways to engage with your community, whether through events, programs, or communication channels.

Try some of the following:

- Organize a virtual town hall meeting to discuss pressing issues and gather community input.

- Host a community-wide contest, such as a photo competition, with prizes for the winners.

- Create a community calendar to keep members informed about upcoming events and opportunities.

- Establish a community ambassador program, where dedicated members help promote the community and welcome new members.

- Organize a hackathon or ideation session to tackle specific challenges within the community.

Challenges and Opportunities in Community Engagement

Building and maintaining an engaged community can be challenging, but there are also many opportunities for growth and development.

Some common challenges include:

- Member retention: Keeping members engaged and active over time can be difficult. Regularly evaluate your community engagement initiatives to address any issues and improve the experience for members.

- Diversity and inclusion: Ensuring that your community is welcoming and inclusive to all members can be an ongoing challenge. Continuously assess your community’s demographics, culture, and policies to identify areas for improvement.

- Time and resources: Managing a community and its engagement initiatives can be time-consuming and resource-intensive. Prioritize your efforts and seek additional support, such as volunteers or community engagement organizations, when needed.

One of the main goals of community engagement is to address the potentially negative issues that affect your community.

This requires you to be proactive in identifying and understanding the challenges your community members face.

Some approaches to addressing these issues include:

- Conduct regular community surveys to gather feedback and insights from members.

- Encourage open dialogue and discussions on pressing topics, creating safe spaces for members to share their experiences and perspectives.

- Collaborate with community members to develop and implement solutions to the identified issues.

- Seek external partnerships and resources to support your community’s efforts in addressing these challenges.

Celebrating Success and Recognizing Contributions

Acknowledging the achievements and contributions of community members is crucial for maintaining engagement and fostering a sense of belonging.

By celebrating success and recognizing the efforts of individuals and groups within your community, you can create a positive, supportive environment that encourages continued participation.

Some ways to celebrate and recognize your community members include:

- Share success stories and testimonials from community members on your communication channels, such as newsletters or social media.

- Organize awards or recognition ceremonies to honor outstanding contributions and achievements.

- Provide badges, certificates, or other tokens of appreciation to members who have made significant contributions to the community.

- Encourage peer recognition by creating channels or threads where members can express gratitude and appreciation for each other’s efforts.

In summary, building community engagement in a community forum, Slack channel, or Discord channel requires a strategic approach, practical activities, and ongoing evaluation.

By understanding the types of community engagement, implementing best practices, and leveraging the support of community engagement services, you can create a thriving, engaged community that addresses the issues affecting its members and celebrates their achievements.

Frequently Asked Questions

What is community engagement?

Community engagement is the process of involving community members in decision-making, problem-solving, and collaborative efforts to address issues that impact the community. In online forums and chat channels, it involves creating opportunities for members to participate, contribute, and connect with others.

Why is community engagement important for online forums and chat channels?

Because it fosters a sense of belonging, encourages active participation, and helps to create a vibrant, thriving community. Engaged members are more likely to contribute valuable content, share ideas, and support one another, ultimately contributing to the growth and success of the community.

What are the different types of community engagement?

There are several types of community engagement, including informing, consulting, involving, collaborating, and empowering. These types range from passive involvement (e.g., sharing information) to active collaboration and decision-making (e.g., partnering with community members to develop and implement projects).

How can I create an inclusive and welcoming environment for community members?

To create an inclusive and welcoming environment, ensure that all members feel valued, respected, and heard. Encourage diversity, establish clear guidelines for behavior, and proactively address barriers to participation. Provide accessible communication channels and be responsive to the needs and concerns of members.

What are some practical activities to increase community engagement?

Practical activities to increase community engagement include hosting virtual events, organizing community challenges or contests, encouraging member-generated content, creating subgroups or channels for specific interests, and providing opportunities for members to volunteer or contribute to community projects.

How can I encourage collaboration and interaction among community members?

Encourage collaboration and interaction by fostering a supportive and inclusive environment, providing clear guidelines for communication, and creating opportunities for members to work together on projects or discussions. Offer tools and platforms that facilitate collaboration, such as shared documents, project management software, or designated discussion channels.

What are some examples of successful community engagement initiatives?

Successful community engagement initiatives can include organizing virtual town hall meetings, hosting webinars or workshops, creating mentorship programs, developing a community newsletter, and establishing a community ambassador program.

How can I measure the success of my community engagement efforts?

Measure the success of your community engagement efforts by tracking key metrics such as active users, retention rate, content contribution, event attendance, and member satisfaction. Regularly evaluate these metrics to identify areas for improvement and make adjustments as needed.

What are some challenges associated with building community engagement?

Challenges associated with building community engagement include member retention, diversity and inclusion, time and resource constraints, managing conflict, and ensuring the relevance and quality of content and activities.

How can I address the challenges of maintaining an engaged community?

Address these challenges by continuously evaluating and improving your community engagement efforts, prioritizing inclusivity, providing clear communication and guidelines, seeking external support (e.g., community engagement organizations), and celebrating the achievements and contributions of community members.

What are the best practices for managing an online community?

Best practices for managing an online community include fostering inclusivity, prioritizing clear communication, building trust, fostering collaboration, and continuously evaluating and improving your community engagement efforts.

How can I create a sense of ownership and shared responsibility among community members?

Encourage a sense of ownership and shared responsibility by involving community members in decision-making, providing opportunities for members to lead projects or initiatives, and promoting collaboration and support among members.

How can I ensure that my community remains relevant and engaging over time?

Keep your community relevant and engaging by staying informed about members’ interests and needs, adapting your content and activities accordingly, and continuously seeking feedback and input from members.

How can I effectively moderate my online community to maintain a positive environment?

Moderate your community effectively by establishing clear guidelines for behavior, consistently enforcing those guidelines, and providing channels for members to report any violations or concerns. Train moderators to handle conflicts and issues in a fair and respectful manner, and create a transparent process for addressing any disputes.

How can I leverage community engagement services or companies to support my efforts?

Community engagement services or companies can offer expertise, resources, and support to help you manage and grow your community. They can assist with strategy development, moderation, analytics and reporting, and training or workshops.

What role do community engagement organizations play in supporting online communities?

Community engagement organizations can provide guidance, resources, and support to help online communities build and maintain engagement. They can offer expertise in best practices, strategy development, and implementation, as well as support for specific initiatives or projects.

How can I use events to foster community engagement?

Events, such as virtual town halls, webinars, workshops, and contests, can provide opportunities for members to connect, learn, and contribute to the community. Promote and facilitate event participation by providing clear information, accessible platforms, and opportunities for interaction and collaboration.

How can I promote diversity and inclusion within my online community?

Promote diversity and inclusion by actively seeking and welcoming members from diverse backgrounds, addressing barriers to participation, and creating a safe and supportive environment for all members. Encourage diverse perspectives and provide opportunities for members to share their experiences and insights.

How can I encourage members to contribute content and ideas to the community?

Encourage content contribution by creating a supportive environment, providing clear guidelines and expectations, and offering incentives or recognition for quality contributions. Provide platforms and tools that facilitate content creation and sharing, and actively promote member-generated content within the community.

What are some ways to recognize and reward community members for their engagement and contributions?

Recognize and reward community members through public acknowledgment (e.g., in newsletters or social media), awards or recognition ceremonies, badges or certificates, and peer recognition channels. Offer tangible incentives or prizes for contests or challenges to motivate participation.

How can I effectively handle conflict and negative behavior within my online community?

Handle conflict and negative behavior by establishing clear guidelines for behavior, consistently enforcing those guidelines, and providing channels for members to report any violations or concerns. Train moderators to handle conflicts and issues in a fair and respectful manner, and create a transparent process for addressing any disputes.

What are some tools or platforms that can help facilitate community engagement?

Tools and platforms that can facilitate community engagement include project management software, collaboration tools, shared documents, communication platforms (e.g., Slack, Discord), and event hosting platforms (e.g., Zoom, Webex).

How can I use analytics to inform and improve my community engagement efforts?

Use analytics to track key engagement metrics (e.g., active users, retention rate, content contribution), identify trends, and uncover areas for improvement. Regularly evaluate these metrics to inform your community engagement strategy and make adjustments as needed.

How can I create a sense of community identity and pride among members?

Foster community identity and pride by developing a shared vision and values, promoting community achievements and successes, and encouraging members to contribute and collaborate on community projects and initiatives.

How can I encourage members to take on leadership roles within the community?

Encourage leadership by providing opportunities for members to lead projects, initiatives, or subgroups, offering mentorship or training programs, and recognizing and promoting members who demonstrate strong leadership qualities.

What are some ways to maintain member interest and engagement over time?

Maintain member interest and engagement by continuously adapting your content and activities to align with members’ interests and needs, seeking feedback and input from members, and providing opportunities for members to connect, learn, and grow within the community.

How can I ensure that my community remains a safe and respectful space for all members?

Ensure a safe and respectful space by establishing and enforcing clear guidelines for behavior, addressing any violations or concerns promptly and fairly, and providing channels for members to report issues or conflicts. Create a culture of respect and support by fostering open communication and promoting inclusivity.

How can I involve community members in decision-making and problem-solving?

Involve community members in decision-making and problem-solving by creating opportunities for input and feedback, such as surveys, polls, or discussion forums. Encourage collaboration on projects or initiatives, and provide platforms and tools that facilitate group problem-solving and decision-making.

How can I leverage partnerships and external resources to support my community engagement efforts?

Leverage partnerships and external resources by seeking collaborations with organizations or individuals who share your community’s goals and values, tapping into external expertise and resources, and exploring grant or funding opportunities to support specific community projects or initiatives.

How can I continuously improve and adapt my community engagement strategy to meet the evolving needs of my community?

Continuously improve and adapt your community engagement strategy by regularly evaluating your engagement metrics, seeking feedback and input from community members, staying informed about new trends and best practices, and being open to change and innovation. Use this information to refine your strategy and make adjustments as needed to better serve your community’s needs.

5 Things I Would Do To Fix Twitter

So, Elon Musk has purchased Twitter.

I don’t really want to get into the politics of whether this is a good or bad thing (other people are already debating this), but it got me thinking about what needs fixing in Twitter.

There is little doubt that Twitter faces a number of challenges, including bad behavior, trolling, misinformation, and more…and many of these problems stem from a broken incentive model.

So, I decided to put together a video with five practical recommendations for what I would focus on if I were in Elon’s position to improve how Twitter works.

I cover solutions including:

- Building a better incentive model that focuses on reading, content, collaboration, and more.

- Integrating citations into Community Notes to provide clarity around news-related topics.

- Providing a pathway to earn verification at no cost, as an alternative to paying $8 to be verified (especially important for low-income users, students, etc.).

- Creating better features for content creators (and raising visibility of content based on key metrics).

- Opening up how the Twitter algorithm works.

Check it out:

Should you use Facebook Groups for Your Community?

Yeah…yeah…I get it…Facebook…

…even people who use Facebook don’t seem to be huge fans of Facebook as a company…but let’s put that to one side for a moment.

Thousands of companies, interest groups, support groups and more use Facebook Groups every single day, so, here is the question: are Facebook Groups a good platform for building an awesome community?

You know what, it is a fair question.

There are a billion people on Facebook, and Facebook Groups are totally free…so does it make sense to dip your fishing rod into the communal fish pond (so to speak)?

Well, unlike people on Twitter complaining endlessly about anything to do with Facebook (or Meta), I decided to remove emotion from the equation and explore if Facebook Groups are any good.

In my video I explore the good and bad of Facebook Groups covering features, capabilities, insights, data, notifications and more…while also demoing a live community in a Facebook Group.

Finally, I will let you know what I would do if I was in your shoes and whether I would use Facebook Groups for a community…

Spoiler alert: there is a lot to like, and there are some things that really suck…give it a watch and let me know what you think!

How Developers Stay up to Date in 2022

Developers are a really important demographic for a lot of companies. Front-end, back-end, QA, cloud architects, mobile devs…every tech company needs clarity on how to engage with developers.

But…how do you know how developers like to stay up to date? How can you be a voice in the places they are listening?…

…well, I reached out to my friends at SlashData and they shared some super-interesting 2022 data that shines a light on how thousands of developers choose to stay up to date.

I not only walk through this 2022 data to help you make sense of it…but I also provide some super-practical recommendations for how you can build engagement with developers TODAY.

You should definitely check this out if you want to:

- Engage more effectively with developers…

- Better understand developer habits and interests…

- Reduce the amount of content that doesn’t get seen…

- Optimize your overall developer engagement and developer relations…

Check it out:

Also, be sure to check out my other video sharing the 2022 data for how developers like to learn and problem-solve here:

How Developers Consume Content in 2022

Developers are in high demand for technical companies and communities, but getting developer attention is harder than ever before…

…so, a lot of companies and communities create enormous amounts of content to attract developers…but a lot of it doesn’t get any traction.

Bummer.

So, how do you figure out how to create content that developers will love?

Well, we start with data.

I reached out to my pals at SlashData and asked them if they had some insight on how developers like to learn and solve problems…and boy did they deliver…

They sent me data from surveying thousands of developers that is enormously helpful in guiding how we structure and develop content developers will love.

So, I put together a video in which I walk through:

- An overview of the data from SlashData that sheds light on what kinds of content developers prefer for learning new technologies and problem solving.

- An analysis of these trends and what they mean for you (especially how they impact your community platforms).

- Three practical things, based on this data, that you can do today to optimize how you create content for developers.

Whether you work in AI/ML, cloud, mobile development, game development, open source, infrastructure, web3, or any other industry where you need to grab developer attention…this video is a must-watch.

Check it out:

This data is powerful, so be sure to share it with others who are building developer engagement.

How to Deal with Internet Trolls

Did you know that 5.6% of people self-identify as Internet trolls?

This isn’t particularly surprising…but there is a broader challenge…

…I believe that a much larger group of people are not Internet trolls…but they are generally anti-social when online.

This still presents a problem for us…how do you deal with these people so you can not only limit their damage and manage them…but also so you can sift out useful feedback from the noise?

Well, I decided to put together a quick video guide.

In it I cover:

- The science of Internet trolls and the “dark triad” of personalities.

- How to judge if and how you should respond…with examples.

- How to “Stay Classy” when dealing with difficult people.

You can think of this video as your go-to kit for dealing with the difficult. Check it out:

Dean Baratta On Intelligence and Security

Dean Baratta comes on to talk about his fascinating career working in intelligence, security, and protection.

Communities are changing the way we do business. Discover a concrete framework for building powerful, productive communities and integrating them into your business. My new book, ‘People Powered: How communities can supercharge your business, brand, and teams’, is out now, available in Audible, Hardcover, and Kindle formats.

As usual, thank you to the fantastic Marius Quabeck and NerdZoom Media for mixing the show!